Visualization Overview

Posted: 2017-03-25 Filed under: Coding, General, Workshop, WPF

The Visualization Overview section is now available in my growing tuning series. I struggled to keep it from turning into a catalog of functionality (going too deep), an API overview (being too shallow), or a design justification (getting too long-winded).

Ultimately, the system overviews exist to give the tuning pages I’m going to create enough context on what I’m tuning, without my having to explain it in-line and detract from the actual tuning content.

Splash of Color

Posted: 2016-01-27 Filed under: General, Guildsmanship, Workshop, WPF

Putting all the fragments together gives the basic shape of a meta-model, but doesn’t explain how to get to this:

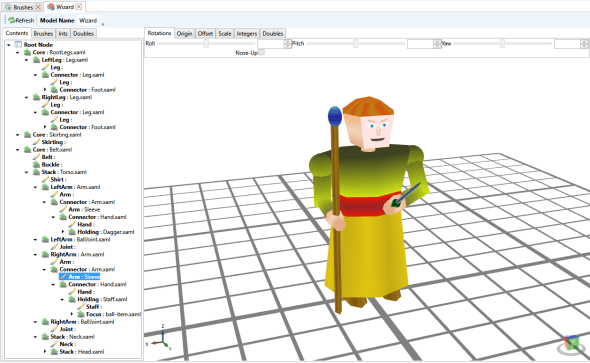

The first thing to do is to define the set of brushes that can be used to paint the miniature. This is done by opening the “brushes” node for the meta-model in the package explorer.

Resulting in the brushes editor:

From within it, it is possible to add colors, images, gradients and stacks. Colors are pretty self-explanatory: they are solid colors. Images are images available to the model, either bound directly to the part or referenced as a resource in the package. Gradients are linear gradients based on WPF gradient brushes. Stacks are sets of brushes that are overlaid on top of each other (assuming that the upper brushes have some transparency). Stacks allow a single brush to combine gradients running in more than one direction.
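To make the idea of a stack concrete, here is a minimal sketch of how layered brushes could be flattened to one color at a single point. It is an illustration only, not the workshop’s code: the names `over` and `flatten_stack` are hypothetical, and it assumes each brush has already been sampled to a straight-alpha RGBA value.

```python
from typing import List, Tuple

# One sampled brush color: red, green, blue, alpha, each in 0..1 (straight alpha).
RGBA = Tuple[float, float, float, float]


def over(top: RGBA, bottom: RGBA) -> RGBA:
    """Composite `top` over `bottom` using the standard source-over rule."""
    tr, tg, tb, ta = top
    br, bg, bb, ba = bottom
    out_a = ta + ba * (1.0 - ta)
    if out_a == 0.0:
        return (0.0, 0.0, 0.0, 0.0)

    def blend(t: float, b: float) -> float:
        return (t * ta + b * ba * (1.0 - ta)) / out_a

    return (blend(tr, br), blend(tg, bg), blend(tb, bb), out_a)


def flatten_stack(layers: List[RGBA]) -> RGBA:
    """Layers are listed bottom first; each later layer is painted over the result."""
    result: RGBA = (0.0, 0.0, 0.0, 0.0)
    for layer in layers:
        result = over(layer, result)
    return result


# An opaque red base with a half-transparent blue layer on top.
purple = flatten_stack([(1.0, 0.0, 0.0, 1.0), (0.0, 0.0, 1.0, 0.5)])
# A fully opaque upper layer hides everything beneath it.
hidden = flatten_stack([(1.0, 0.0, 0.0, 1.0), (0.0, 1.0, 0.0, 1.0)])
```

The second case shows why the upper brushes need some transparency: without it, the lower layers contribute nothing.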

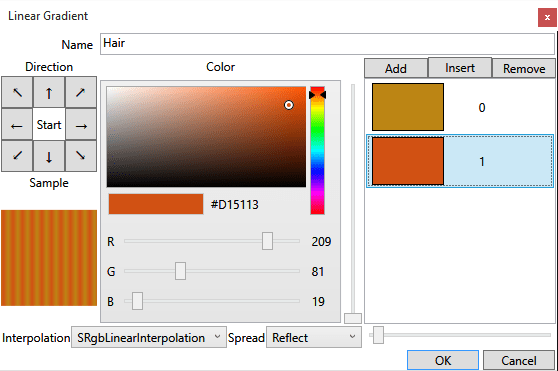

Next, opening a gradient brush shows the gradient editor:

The directionality of the gradient is selected via the array of buttons with arrows. The gradient stops are modified with the list box and buttons on the right side. The slider to the left of the gradient stop list box sets the relative location of the currently selected gradient stop. The striping effect of the gradient is controlled by the “spread” combo, which determines how the pattern repeats when it ends, and by the slider next to the combo, which determines how often the pattern repeats.
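The spread behavior can be sketched as a small function. This is a hedged illustration rather than the editor’s implementation: it assumes the combo maps onto the three WPF `GradientSpreadMethod` values (pad, reflect, repeat) and models the repeat slider as a hypothetical `repeats` multiplier on the gradient coordinate.

```python
import math
from typing import List, Tuple

Color = Tuple[float, float, float]
Stop = Tuple[float, Color]  # (relative offset in 0..1, color)


def apply_spread(t: float, method: str) -> float:
    """Map any gradient coordinate back into 0..1 according to the spread method."""
    if method == "pad":        # hold the end colors outside the range
        return min(max(t, 0.0), 1.0)
    if method == "repeat":     # start the pattern over
        return t - math.floor(t)
    if method == "reflect":    # run the pattern backwards, then forwards again
        u = t % 2.0
        return 2.0 - u if u > 1.0 else u
    raise ValueError(f"unknown spread method: {method}")


def sample(stops: List[Stop], t: float, method: str = "pad", repeats: float = 1.0) -> Color:
    """Linearly interpolate between the gradient stops at coordinate t."""
    t = apply_spread(t * repeats, method)
    ordered = sorted(stops, key=lambda s: s[0])
    if t <= ordered[0][0]:
        return ordered[0][1]
    for (o0, c0), (o1, c1) in zip(ordered, ordered[1:]):
        if t <= o1:
            f = 0.0 if o1 == o0 else (t - o0) / (o1 - o0)
            return tuple(a + (b - a) * f for a, b in zip(c0, c1))
    return ordered[-1][1]


black_to_white = [(0.0, (0.0, 0.0, 0.0)), (1.0, (1.0, 1.0, 1.0))]
mid_gray = sample(black_to_white, 0.5)
striped = sample(black_to_white, 0.75, method="repeat", repeats=2.0)
```

With `repeats=2.0` the pattern runs twice across the surface, so the point three quarters of the way along lands in the middle of the second stripe.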

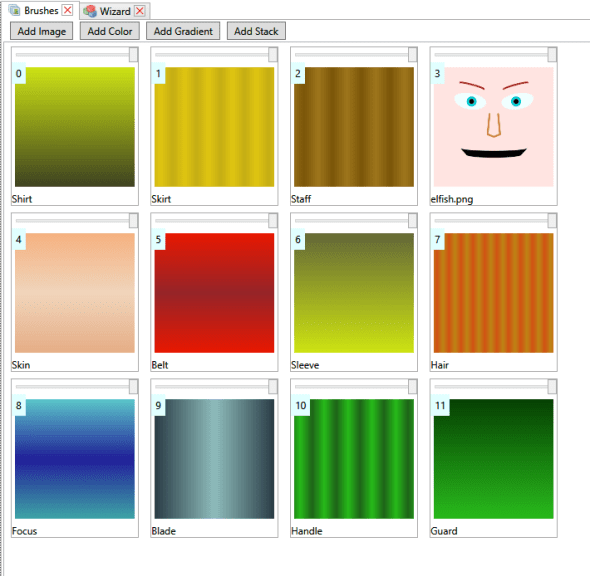

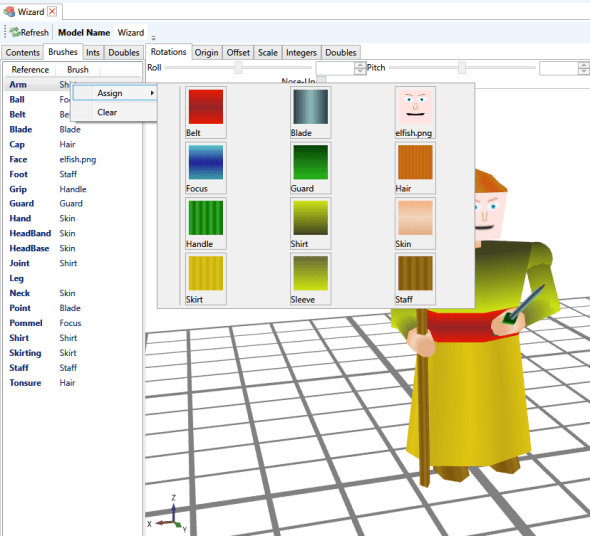

Now, to the actual painting back in the meta-model editor.

The “brushes” tab lists all the named areas that can have brush references mapped, and the currently mapped reference. Right-clicking one and selecting “assign” brings up a visual list of all the brushes that can be applied.

These references are the “global” brush references for the meta-model. Individual fragment instances can vary from them: overrides apply to brushes as well as to “parameters” such as lengths and radii. It is also possible to place more than one fragment in a reference connector.

For example, on the left arm, the first “arm.xaml” segment connected to the torso doesn’t have anything mapped for the “arm” brush, but the “arm.xaml” mapped in its “connector” has its “arm” brush overridden to be “sleeve”, which is effectively the “shirt” brush in the reverse direction, so that the visual jump at the elbow isn’t so jarring.
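The lookup order described here (instance override first, global reference second) can be sketched as follows. The class and function names are hypothetical, and the example assumes the global “arm” reference is mapped to the “shirt” brush, which the post implies but does not state outright.

```python
from typing import Dict, Optional


class FragmentInstance:
    """One placed fragment, with optional per-instance brush overrides."""

    def __init__(self, fragment: str, brush_overrides: Optional[Dict[str, str]] = None):
        self.fragment = fragment
        self.brush_overrides = dict(brush_overrides or {})


def resolve_brush(key: str, instance: FragmentInstance,
                  global_brushes: Dict[str, str]) -> Optional[str]:
    """An instance override wins; otherwise fall back to the meta-model mapping."""
    if key in instance.brush_overrides:
        return instance.brush_overrides[key]
    return global_brushes.get(key)


# Assumed global mapping for the meta-model.
global_brushes = {"arm": "shirt"}

upper_arm = FragmentInstance("arm.xaml")                    # nothing overridden
forearm = FragmentInstance("arm.xaml", {"arm": "sleeve"})   # override on this instance

upper_brush = resolve_brush("arm", upper_arm, global_brushes)
forearm_brush = resolve_brush("arm", forearm, global_brushes)
```

Both segments use the same fragment file; only the instance-level override makes them paint differently.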

Also, I mapped another segment “balljoint.xaml” to the left-arm slot on the torso to create the slightly bulging shoulders.

Finally, to reduce the relative thickness of the forearms, I select the forearm segment, switch to the “doubles” tab, unselect the “yield” checkbox for arm-diameter and freely adjust the diameter.

Some Assembly Required

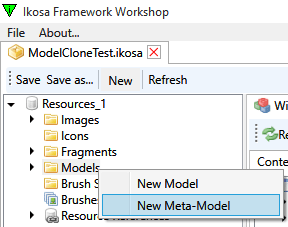

Posted: 2015-12-03 Filed under: Workshop, WPF

In this post I’ll demonstrate constructing a meta-model: a model made out of little fragments of 3D models snapped together, scaled and rotated. I’ll follow up with a model painting post. Somehow I have managed to emulate all the tedium and time-consuming nature of painting miniatures for table-top RPGs, and put it into software, while retaining the tedium and time-consuming nature of working with miniatures. It’s actually not that bad since I created a “duplicate miniature” function.

Anyway, within a resource manager, we need to create a new meta-model and base it off a XAML file that has “fragment references” (I use one called RootBase.xaml). Fragment references are a XAML extension I created that indicate where a fragment can be included in a model. The technique is recursive so that fragments themselves can contain fragment references. Think of a fragment reference as a placeholder.

    <Model3DGroup xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
                  xmlns:uzi="clr-namespace:Uzi.Visualize;assembly=Uzi.Visualize">
      <Model3DGroup.Children>
        <Model3DCollection>
          <uzi:FragmentReference Key="Core" OffsetZ="{uzi:DoubleVal Key=CoreElev,Value=2.1,MinValue=0,MaxValue=5}"/>
        </Model3DCollection>
      </Model3DGroup.Children>
    </Model3DGroup>

When we open the meta-model in the editor (built on the Helix Toolkit) we can see that it is empty. Evidence that there is a reference in the file shows in the “Root Node” of the tree-view. There is a single reference called “Core” which is a placeholder (literally, a location reference point about 2.1 feet above {0,0,0}), that can be adjusted in a variety of ways.

Since my resource package already has my collection of fragments included, I only need to assign parts to begin constructing the meta-model. In the following image I’ve assigned “RootLegs.xaml” to the Core reference. This provided two more reference points, one for each leg, and I went ahead and assigned “Leg.xaml” to each of them. Leg.xaml has a connector reference so we can build downward to another leg part, and then a foot part. It also has a VisualEffectMaterial called “Leg”, which is a texture reference to be explained a little more in another post.

I also cloned the Core reference so I could get a second fragment anchored to the same spot. When cloning, I have to supply the fragment that will be held in that spot; in this case I chose “Belt.xaml”. Belt has a Buckle reference at the front, and a Stack reference onto which a torso is usually placed.
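The recursive nature of fragment references can be sketched as a small tree structure. This is an illustration under assumptions, not the Uzi.Visualize data model: the classes are hypothetical, and while “Core”, “Buckle” and “Stack” are keys named in the post, “LeftLeg”, “RightLeg” and “Connector” are made-up key names.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Reference keys exposed by each fragment file (partly assumed, see above).
FRAGMENT_LIBRARY: Dict[str, List[str]] = {
    "RootBase.xaml": ["Core"],
    "RootLegs.xaml": ["LeftLeg", "RightLeg"],
    "Leg.xaml": ["Connector"],
    "Belt.xaml": ["Buckle", "Stack"],
}


@dataclass
class Slot:
    """A fragment reference: a placeholder that can hold one or more fragments."""
    key: str
    children: List["Node"] = field(default_factory=list)


@dataclass
class Node:
    """A placed fragment together with the placeholders it exposes."""
    fragment: str
    slots: Dict[str, Slot] = field(default_factory=dict)


def instantiate(fragment: str) -> Node:
    keys = FRAGMENT_LIBRARY.get(fragment, [])
    return Node(fragment, {key: Slot(key) for key in keys})


def assign(parent: Node, key: str, fragment: str) -> Node:
    """Place a fragment in a slot; a second call on the same slot acts like a clone."""
    child = instantiate(fragment)
    parent.slots[key].children.append(child)
    return child


def open_slots(node: Node, prefix: str = "") -> List[str]:
    """List every placeholder in the tree that has nothing assigned yet."""
    found: List[str] = []
    for key, slot in node.slots.items():
        path = f"{prefix}/{key}"
        if not slot.children:
            found.append(path)
        for child in slot.children:
            found.extend(open_slots(child, f"{path}[{child.fragment}]"))
    return found


root = instantiate("RootBase.xaml")
legs = assign(root, "Core", "RootLegs.xaml")
assign(legs, "LeftLeg", "Leg.xaml")
assign(legs, "RightLeg", "Leg.xaml")
belt = assign(root, "Core", "Belt.xaml")  # second fragment anchored to Core
remaining = open_slots(root)
```

After these steps `remaining` holds the two leg connectors plus the belt’s Buckle and Stack references, which are the spots still waiting for parts.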

After a bit more of this, I get pretty close to a complete (unpainted) miniature.

I left the right hand off, so now I assign it.

Knowing the hand is a little bit big, I need to adjust it down. The hand model contained another extension called a DoubleRef, which provides me a way to adjust it in the workshop. Actually, the entire conic mesh is programmatically generated (and cached) via a markup extension as well!

    <GeometryModel3D Material="{uzi:VisualEffectMaterial Key=Hand, VisualEffect={uzi:SenseEffectExtension}}"
                     Geometry="{uzi:ConeMesh CacheKey=1,
                       Origin={uzi:Point3DVal},
                       Direction={uzi:Vector3DVal Z=-1},
                       Height={uzi:DoubleVal Key=HandLength,Value=0.4},
                       BaseRadius={uzi:DoubleVal Key=WristRadius,Value=0.18},
                       TopRadius={uzi:DoubleVal Key=FingerRadius,Value=0.12},
                       ThetaDiv={uzi:IntVal Key=HandTheta,Value=8},
                       BaseCap=true,TopCap=true}">
    </GeometryModel3D>

Both the “Doubles” tab and the “Ints” tab control the value of all references to the named integers and doubles in the miniature. ArmTheta, for instance, controls the number of “sides” for an arm cylinder. You can guess what the other ones do.
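One way to picture how a single tab entry can drive every reference to a named value is a lookup table keyed by name. The sketch below is hypothetical: the `Key`, `Value`, `MinValue` and `MaxValue` fields mirror the markup, but the `ParameterTable` class and the assumption that overrides are clamped to the declared range are guesses, not confirmed behavior.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class DoubleVal:
    """A named double as declared in a fragment, with its default and limits."""
    key: str
    value: float
    min_value: float = float("-inf")
    max_value: float = float("inf")


class ParameterTable:
    """Values set in the editor, shared by every reference with the same key."""

    def __init__(self) -> None:
        self._overrides: Dict[str, float] = {}

    def set(self, key: str, value: float) -> None:
        self._overrides[key] = value

    def resolve(self, ref: DoubleVal) -> float:
        raw = self._overrides.get(ref.key, ref.value)
        # Assumption: values outside the declared range are clamped.
        return min(max(raw, ref.min_value), ref.max_value)


table = ParameterTable()
wrist = DoubleVal("WristRadius", 0.18)
core = DoubleVal("CoreElev", 2.1, min_value=0.0, max_value=5.0)

default_wrist = table.resolve(wrist)   # no override yet, so the default applies
table.set("WristRadius", 0.15)         # shrink the hand a little
smaller_wrist = table.resolve(wrist)
table.set("CoreElev", 9.0)             # beyond MaxValue
clamped_core = table.resolve(core)
```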

I have a separate utility to construct head models; I’ll explain that sometime if I get around to it.

Next I put a staff in the wizard’s hand. Naturally this doesn’t orient and position neatly into my customized model.

So, I select the staff, and adjust the pitch. I had been adjusting the rest of the model to this point in a similar way, and gave the right arm a slight downward pitch, which I have to overcompensate for by applying a 95 instead of a 90 degree pitch.
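The arithmetic behind the 95 degrees can be sketched with plain rotation matrices. This assumes that the arm’s downward pitch was about 5 degrees and that both pitches rotate about the same axis, neither of which the post states exactly; the helper names are hypothetical.

```python
import math
from typing import Tuple

Matrix = Tuple[Tuple[float, float], Tuple[float, float]]


def pitch_matrix(degrees: float) -> Matrix:
    """2D rotation in the plane that the pitch axis is perpendicular to."""
    r = math.radians(degrees)
    c, s = math.cos(r), math.sin(r)
    return ((c, -s), (s, c))


def compose(parent: Matrix, child: Matrix) -> Matrix:
    """Matrix product parent * child: the child rotation applied inside the parent."""
    return tuple(
        tuple(sum(parent[i][k] * child[k][j] for k in range(2)) for j in range(2))
        for i in range(2)
    )


def angle_of(m: Matrix) -> float:
    """Recover the rotation angle in degrees from a rotation matrix."""
    return math.degrees(math.atan2(m[1][0], m[0][0]))


arm = pitch_matrix(-5.0)     # assumed slight downward pitch on the arm
staff = pitch_matrix(95.0)   # pitch applied to the staff fragment
net = angle_of(compose(arm, staff))  # close to 90 degrees
```

Because rotations about a shared axis simply add, the staff inherits the arm’s pitch and needs the extra 5 degrees to end up upright.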

Also, the staff is running a little high, so I adjust the Z-Origin up a bit; origins are relative to the fragment reference point for the fragment. Offsets are in “final” model-space and can be more tricky to work with. I also assigned a fragment to the “Focus” reference of the staff fragment.

I fix this by Rolling the staff fragment 180 degrees, then I adjust the scale of the Ball fragment (since I don’t have separate radius parameters for a sphere mesh).

Next time (perhaps), painting a miniature.

Slot Machine…

Posted: 2014-09-11 Filed under: Guildsmanship, WPF

Here’s what I was really working on when I mentioned slots a few posts back: workshop setup of spell slots. I’ll capture a picture of casting a spell in the client a little later.

Also, with about 10-15 minutes of time and existing model fragments, I put this together:

The Itsy Bitsy…

Posted: 2014-09-09 Filed under: Guildsmanship, WPF

I’ve been meaning to put together the model fragments (and the whole model) for a spider for a while. Ta da! Now I just need to get Climb Movement in the game engine fully implemented (and various web actions and effects) so the spider can be more menacing.

Misfire

Posted: 2014-07-29 Filed under: Debugging, Guildsmanship, WPF

I have a targeting system and activity builder I’ve been working on to pick targets for actions. One target type that I’d been working on a lot over the last month was attack targeting. I’ve got it fairly well along, so I figured I’d go back and apply a similar design principle to awareness targeting.

Attack targeting requires making attack rolls and needs to know certain things about the type of attack such as whether the attack is with a hand held weapon in melee range versus a reach or ranged weapon, and the effective attack launch points. Awareness targeting requires a simple selection of creatures or objects of which one is aware. I already had a basic system that used all the things the actor is aware of in a drop-down list, but wanted it to work more like attack targeting in which if I pre-select items, they are the only things that show in the list.
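The pre-selection behavior described for the awareness list can be sketched in a few lines. The function name and the use of plain strings for creatures and objects are hypothetical simplifications.

```python
from typing import Iterable, List


def selectable_targets(aware_of: Iterable[str], preselected: Iterable[str]) -> List[str]:
    """If anything is pre-selected, show only those items; otherwise show
    everything the actor is aware of."""
    aware = list(aware_of)
    chosen = set(preselected)
    if chosen:
        return [target for target in aware if target in chosen]
    return aware


aware = ["self", "goblin", "door", "skeleton"]
everything = selectable_targets(aware, [])
narrowed = selectable_targets(aware, ["skeleton", "goblin"])
```

Note that nothing in this sketch stops the actor from appearing in the list, which is consistent with the accident described in this post.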

Well, I eventually got the two fairly similar, but hadn’t worked out all the display characteristics for the selection list items. So I was testing and picked the first two items in the list for my two magic missile wand test. I hadn’t quite realized I had targeted myself until I didn’t see any missiles visibly flying to targets, but the tell-tale impact splash was centered on my camera.

Dutifully I rolled both missile damages, then watched as my display window went black…I had knocked myself unconscious and dying.

Yay!

A Bone to Pick

Posted: 2014-07-28 Filed under: General, WPF

Although I’ve been doing a lot besides making models over the past month, I just thought I’d share this bit of skeleton with a working rib-cage. I’ve actually been working a bit on the targeting process, and will post some images of that in a bit; I just want to make sure the auto-aiming of pre-selected attack targets is where I want it to be.

Skeleton Model

Posted: 2014-07-02 Filed under: Guildsmanship, WPF

OK, no rib-cage yet, so I threw some kind of leather armor over him…

He’s mostly the result of testing out parameterized fragments, with a little bit of the skeleton face and head I produced a month or so ago. Working with Skeley has helped me fine-tune some things in model construction and see where some impedance barriers to smooth asset creation are.

The second picture is direct from the model editor, where the field of view is apparently slightly better. I also made it possible to swap roll and yaw axes on fragment nodes, so I could more easily roll the sword (which is oriented so that it rolls around the Z axis instead of the Y axis).

Also, he keeps me from working more on the targeting system in the client…

Heavily Nested MarkupExtensions

Posted: 2014-06-26 Filed under: Coding, WPF

    <GeometryModel3D Material="{uzi:VisualEffectMaterial Key=Arm, VisualEffect={uzi:SenseEffectExtension}}"
                     Geometry="{uzi:CylinderMesh CacheKey=1,
                       P1={uzi:Point3DVal Z={uzi:DoubleSum A={uzi:DoubleProduct A={uzi:DoubleVal Key=Length,Value=0.75, MinValue=0.1, MaxValue=1.5}, B=-1}, B=-0.09}},
                       P2={uzi:Point3DVal Z=0.16},
                       Diameter={uzi:DoubleVal Key=Diameter,Value=0.4, MinValue=0.05, MaxValue=1.0},
                       ThetaDiv={uzi:IntVal Key=Theta,Value=16, MinValue=8, MaxValue=20},
                       BaseCap=true,TopCap=true}">
    </GeometryModel3D>

This is a bit of the part for an Arm model fragment. I use a lot of markup extensions to get the GeometryModel3D to be flexible enough for composition and environment shading.

First, the Material is a VisualEffectMaterial, which uses the Key to work with the Meta-Model system to figure out which texture to apply. The VisualEffect is mapped to the standard SenseEffectExtension, so that creature senses can be applied at render time.

The next big piece is the CylinderMesh used to provide the Geometry. This uses the HelixToolkit’s MeshBuilder to define parameters for creating a cylinder mesh. The Point3DVals are extensions that define points (obviously), and the DoubleVals and IntVals define parameters that can be overridden when composing in a MetaModel. Lastly, the DoubleProduct (there’s also a DoubleSum) defines a mathematical function on DoubleVals so that the same parameter can be re-used in multiple places as a factor (or sum) of other values.
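The nesting in `P1` amounts to a small expression tree. The sketch below evaluates it outside XAML to show what the markup computes; the classes are hypothetical stand-ins for the markup extensions (the min/max limits are left out for brevity), and only the numbers come from the fragment above.

```python
from dataclasses import dataclass
from typing import Dict, Union


@dataclass
class DoubleVal:
    """A named parameter with a default that a meta-model may override."""
    key: str
    value: float

    def evaluate(self, overrides: Dict[str, float]) -> float:
        return overrides.get(self.key, self.value)


@dataclass
class DoubleProduct:
    a: "Expr"
    b: "Expr"

    def evaluate(self, overrides: Dict[str, float]) -> float:
        return evaluate(self.a, overrides) * evaluate(self.b, overrides)


@dataclass
class DoubleSum:
    a: "Expr"
    b: "Expr"

    def evaluate(self, overrides: Dict[str, float]) -> float:
        return evaluate(self.a, overrides) + evaluate(self.b, overrides)


Expr = Union[float, DoubleVal, DoubleProduct, DoubleSum]


def evaluate(expr: Expr, overrides: Dict[str, float]) -> float:
    """Plain numbers evaluate to themselves; nodes evaluate recursively."""
    if isinstance(expr, (int, float)):
        return float(expr)
    return expr.evaluate(overrides)


# P1.Z from the arm fragment: (Length * -1) + -0.09
p1_z = DoubleSum(
    a=DoubleProduct(a=DoubleVal("Length", 0.75), b=-1),
    b=-0.09,
)

default_z = evaluate(p1_z, {})               # roughly -0.84 with the default Length
longer_z = evaluate(p1_z, {"Length": 1.0})   # roughly -1.09 when Length is overridden
```

Because `Length` appears only once as a named value, overriding it in the meta-model moves the far end of the cylinder without touching the fixed -0.09 offset.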

3D Bladed Weapons

Posted: 2014-06-15 Filed under: General, WPF

From left to right: greatsword, bastard sword, longsword, short sword and dagger. They’re all using the same coloring schemes, so only the sizes and parts vary.